Now an IChemE‑approved course.

Participants will be introduced to real‑world process data challenges and how to solve them with Industrial AI and data science.

You will learn how GenAI tools (ChatGPT, Microsoft Copilot, Gemini…) support and accelerate the application of machine‑learning techniques—and when to use them.

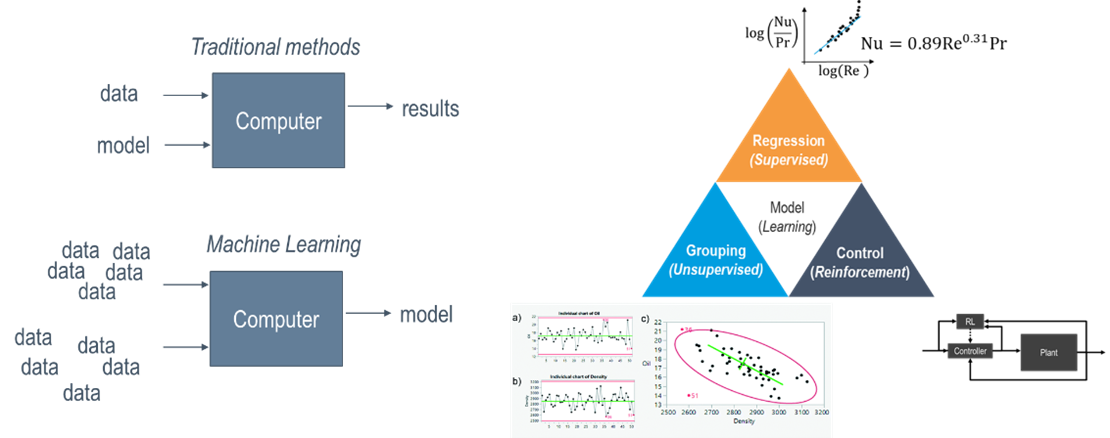

We cover essential methods for regression (supervised learning) and anomaly detection (unsupervised learning) applied to continuous and batch process data, enabling subject‑matter experts such as process engineers to work independently.

Industrial AI driven by subject‑matter experts, such as process engineers, will learn to:

- Define the problem

- Access relevant knowledge

- Extract and transform process data

- Quantify variability and detect significant process changes

- Quickly identify likely root causes to improve processes

- Plan additional experiments when needed.

The workshop is learn‑by‑doing: each day includes hands‑on exercises where you can work with your own datasets on your own laptop. Basic statistics (e.g., Six Sigma concepts) are helpful but not required. Programming background is not necessary (Python, MATLAB, etc.).

Programme:

Day 1 – Troubleshooting processes with Industrial AI and Data Science

- Industrial AI in manufacturing: what’s useful vs. hype; how GenAI supports problem framing, documentation search, and reporting.

- Core machine‑learning concepts via agentic workflows (prompting → exploratory data analysis → feature importance → quick root‑cause analysis), using the distillation tower case; apply random forest/boosted trees to relate drivers to yield.

- Industrial data landscape: tags & historians, automation pyramid (ISA‑95/88), events/batches, and ERP/LIMS joins.

- Import your own CSV/Excel for analysis; curated datasets are also provided.

Day 2 – Monitoring assets

- Introduction to analytics for batch processes: KPI definition for an industrial dryer (feature engineering) and variability tracking (visual analytics, SPC, robust statistics).

- Batch alignment using phase/event markers (e.g., time warping); FPCA vs. KPI summaries.

- Anomaly detection with PCA, KNN, autoencoders, and decision trees; identify plant changes in datasets such as the Tennessee Eastman process.

Day 3 – Modelling & decision support

- Problem definition for improvement vs. prediction; avoiding the “optimise the wrong thing” trap.

- Variable screening at scale: decision trees, bootstrap forests, boosted trees; controlling overfitting (time‑split/cut‑point).

- Explainable AI with SHAP: ranking drivers and interactions.

- Modelling tactics: missing data, Lasso, neural networks; inferential sensors & digital twins.

- Introduction to Bayesian Optimisation vs. DOE.

Dr. Francisco Navarro (Data Science Director at IFF and Visiting Researcher at Imperial College London) [in]

Chemical engineer with 15 years of experience at the intersection of Industrial AI, data science, and manufacturing. At IFF, he leads the democratization of AI and machine learning in manufacturing, enabling engineers to use industrial data science and GenAI to monitor, troubleshoot, and optimize processes at scale. His work focuses on building data literacy and self-service analytical capability across operations, allowing organizations to scale impact beyond specialist teams.

His industrial and research experience spans IFF, Solvay, P&G, and Bayer, combining data-driven methods with manufacturing systems, advanced process control, and process systems engineering. He holds a PhD in modeling and simulation, during which he designed and patented multiphase-flow sonoreactors, and he was also a visiting researcher in Professor Klavs Jensen’s lab at MIT. In 2012, he co-founded CacheMe, an open-source chemical engineering initiative based at the University of Alicante, Spain.

Mattia Vallerio PhD. (Tenure Track Assistant Professor at Politecnico di Milano)

Chemical engineer with extensive industrial leadership experience in digital transformation, advanced process control, and industrial AI within large-scale manufacturing. At Solvay (Syensqo) and BASF, he led cross-functional teams and strategic programs deploying data analytics, optimization, and smart manufacturing solutions across complex production sites. His work focused on integrating IT/OT systems, scaling advanced process control, and embedding data-driven decision-making into daily operations to deliver measurable performance and sustainability improvements.

Building on this industrial foundation, he now serves as Tenure Track Assistant Professor at Politecnico di Milano, where his research bridges Generative AI, machine learning, optimization, and optimal control for chemical processes. His work connects rigorous process systems engineering with modern AI to enable scalable, high-impact solutions for the process industry. He holds a PhD in Chemical Engineering from KU Leuven, specializing in multi-objective optimization and optimal control of (bio)chemical processes.

Carlos Perez Galvan, PhD (Senior Technical Lead – Industrial Data Science at PolyModels Hub) [in]

Carlos is a chemical engineer with broad experience in modelling, simulation, and optimisation across research, manufacturing, and the pharmaceutical industry. At PolyModels Hub, he leads customer-facing technical engagements, helping clients address complex manufacturing challenges. His work focuses on enhancing value across the different stages of pharmaceutical development through the application of systematic approaches, including data-driven and mechanistic modelling, artificial intelligence, design of experiments, and optimisation.

Previously, at Procter & Gamble, Carlos specialised in mechanistic modelling in the fast-moving consumer goods sector. At Solvay and Syensqo, he led an industrial data science team and worked closely with advanced process control specialists to tackle challenges in both continuous and batch plants, including process monitoring, soft sensing, and scheduling. Carlos holds a PhD in Chemical Engineering from UCL, where his research focused on dynamic optimisation under uncertainty.

Registration Fee:

| Industry rate | £ 1700 | (from 16 April 2026 until 29 May 2026) |

| Early bird Industry rate | £ 1400 | (until 16 April 2025) |

| Academic rate | £ 550 | (from 16 April 2026 until 29 May 2026) |

| Early bird academic rate | £ 450 | (until 16 April 2025) |

Cancellations

Substitutions may be made at any time, whilst a valid place is held. The organiser cannot accept liability for costs incurred in the event of a course having to be cancelled as a result of circumstances beyond its reasonable control.

Venue: The Sargent Centre for Process Systems Engineering

Imperial College London

Roderic Hill Building

South Kensington Campus

London SW7 2BB

For the campus website, use this link.

Closest Underground Stations are South Kensington or Gloucester Road.